(Written by Arav Misra and Computational Work by Aaron Conover)

This summer, the Sarah Lawrence Summer Science Program was cancelled due to COVID-19. However, that didn’t stop some intrepid students from tackling research problems remotely, with posts on Slack and occasional Zoom meetings with Merideth to debug issues or discuss next steps.

Below are summaries of two projects worked on by rising high-school senior Arav Misra and rising undergraduate senior Aaron Conover.

Rotational System for Gradient

To adjust the gradient’s positioning, and thus the magnetic field, we needed a system that could accurately rotate the gradient in specified degree increments. Using an Arduino servo motor, we could specify explicit rotations of the gradient.

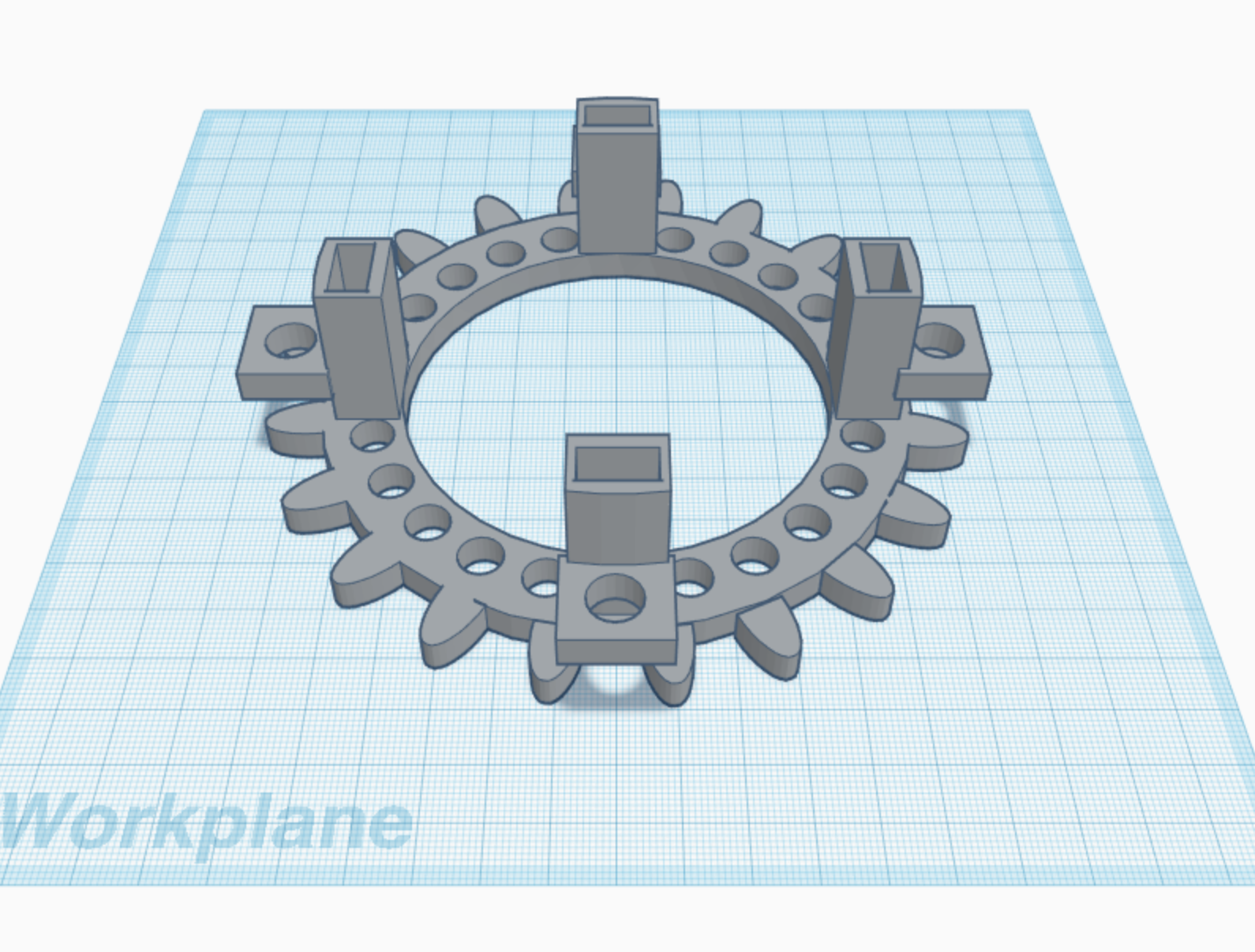

I designed a gear system to surround the exterior of the gradient, along with small protrusions with holes at each quadrantal angle.

The holes are 0.25 inches in diameter and will be used to connect two gradients with Teflon bolts. By connecting the two gradients, the gear system will be able to rotate the upper and lower gradients simultaneously, without the need for a second Arduino servo motor.

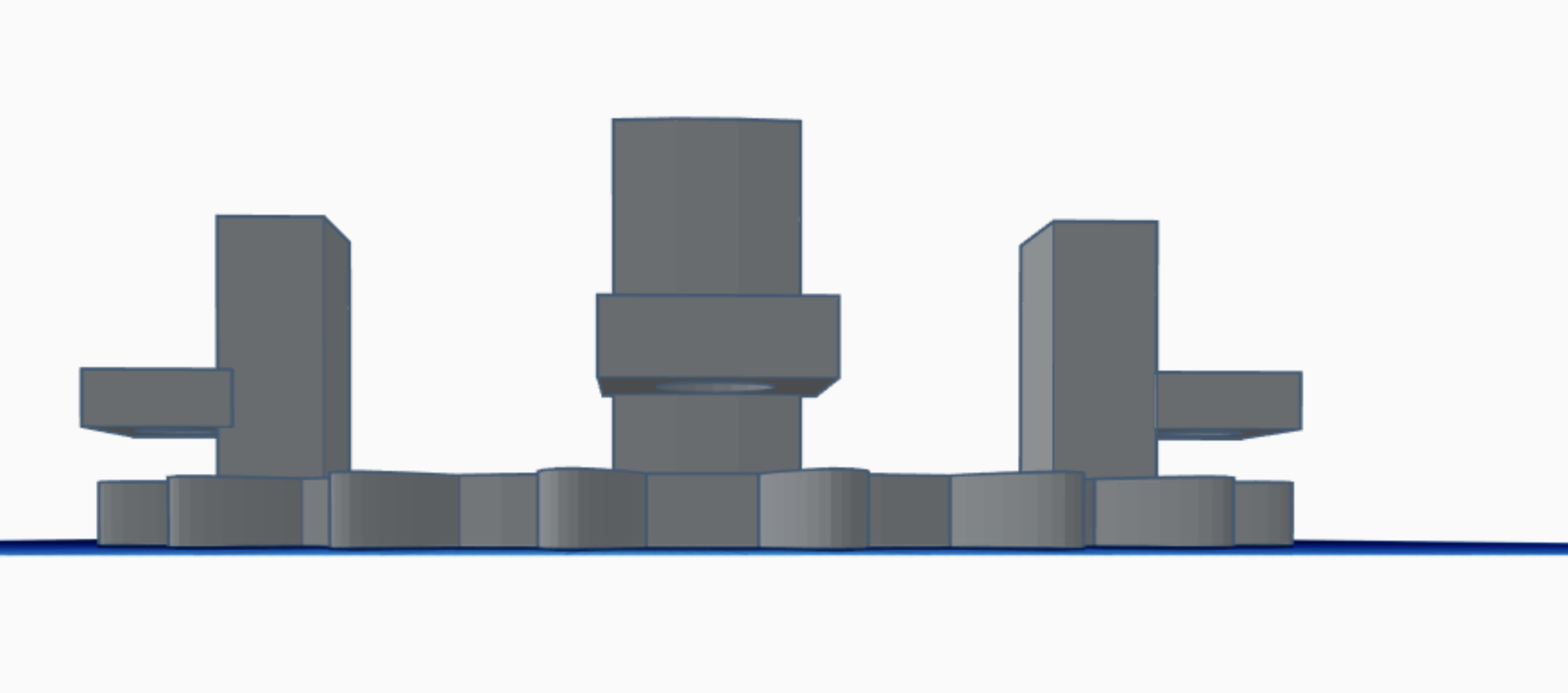

Side View

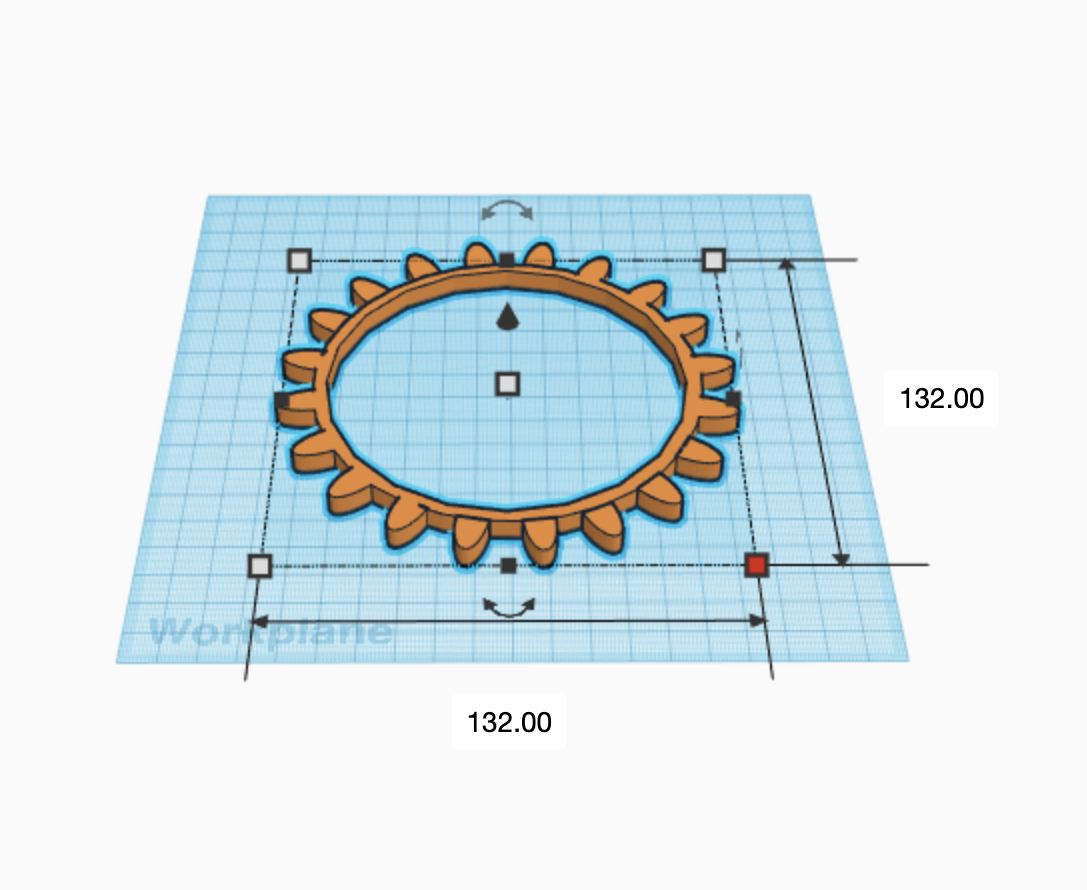

Gear Unattached to Gradient

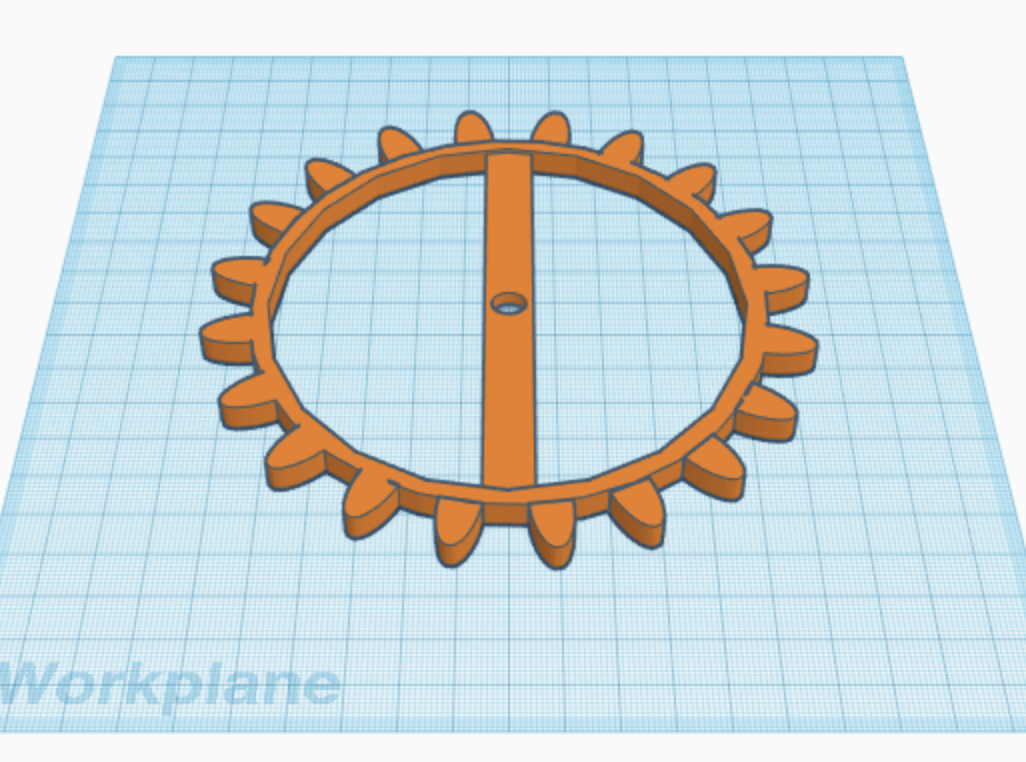

Servo Motor Gear

In the picture above, there is a similar apparatus to the gear, with an added band & hole. The hole is sized to fit the rotational motor component of the servo, so that when given instructions, the gear will turn accordingly.
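As a rough illustration of how a requested gradient rotation translates into a servo command, here is a minimal Python sketch. It assumes the servo gear meshes directly with the gradient gear and that the servo has a 180-degree range. The tooth counts and the function name `servo_angle_for` are hypothetical and are not taken from our design files.

```python
def servo_angle_for(gradient_deg, gradient_teeth, servo_teeth, servo_range_deg=180.0):
    """Servo rotation (degrees) needed to turn the gradient gear by gradient_deg.

    Meshed gears move the same number of teeth past the contact point, so
    servo_deg * servo_teeth == gradient_deg * gradient_teeth.
    The two gears turn in opposite directions; only the magnitude is returned.
    """
    servo_deg = gradient_deg * gradient_teeth / servo_teeth
    if not 0.0 <= servo_deg <= servo_range_deg:
        raise ValueError(
            f"{gradient_deg} deg on the gradient needs {servo_deg} deg on the servo, "
            f"outside its 0-{servo_range_deg} deg range"
        )
    return servo_deg


# Hypothetical tooth counts: 60 on the gradient gear, 20 on the servo gear.
print(servo_angle_for(45, gradient_teeth=60, servo_teeth=20))
```

With a ratio like this, a small servo gear reaches its travel limit quickly, so the tooth counts set the largest gradient rotation a single sweep can produce.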

Finally, the gear’s teeth were modified from the original shape to a more rounded, spaced-out design. This not only allowed for smoother turning, but will hopefully save 3D-printing material as well.

Once these components have been 3D-printed and tested, we’ll post all the relevant files to Thingiverse, so stay tuned.

Studying the Effectiveness of Our Optimization Algorithm

Aaron Conover was charged with looking into the effectiveness of our optimization algorithm from last summer. We wanted to assess how well this algorithm compensates for variations in magnetic field strength among the neodymium magnets we are using. Experimentally, we found a ~20x improvement in field homogeneity simply by changing the magnet positions according to our new optimization algorithm. We wanted to quantify how much improvement in magnetic field homogeneity to expect from the algorithm, compared with randomly placing magnets whose field strengths vary with some set standard deviation.

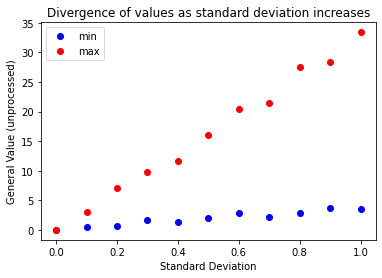

Our optimization algorithm takes a vector of magnetic field strengths, one for each magnet to be placed in the NMR MANDHALA, and tries all the different permutations to determine the optimal placement, as determined by the algorithm’s cost function. Aaron wrote a function that creates a set of ‘magnets’ whose magnetic field strengths follow a distribution with the same mean value but an inputted standard deviation. He then ran our optimization algorithm on randomly generated sets of magnets at different standard deviations, and watched how the optimization parameter for different permutations varied as the standard deviation of the magnets increased. We saved the data only for the least optimized permutation (roughly what you would expect if you just randomly placed magnets without any optimization procedure, denoted by ‘max’ in the figure below) and compared it with the optimized permutation of the magnets (denoted by ‘min’ in the figure below). For the final value of the optimization parameter (denoted as ‘general value’ and plotted along the y axis in the figure below), lower is better, since it directly correlates with the overall magnetic field inhomogeneity.
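The procedure described above can be sketched in a few lines of Python. This is a simplified illustration, not the code from Aaron’s notebook. It assumes a 2D ring of point dipoles in a Halbach dipole pattern, normally distributed strengths, units with μ0/4π = 1, and a cost equal to the summed difference between the field magnitude at a few test points and the ideal center field. All function names, the six-magnet ring, and the test-point layout are assumptions made for the example.

```python
import itertools
import numpy as np


def unit_fields(n_magnets, ring_radius, points):
    """Field at each test point from each ring slot, per unit magnet strength.

    Slot i sits at angle t_i = 2*pi*i/n and is magnetized along angle 2*t_i
    (Halbach dipole pattern). Returns shape (n_magnets, n_points, 2).
    """
    t = 2 * np.pi * np.arange(n_magnets) / n_magnets
    pos = ring_radius * np.stack([np.cos(t), np.sin(t)], axis=1)
    m = np.stack([np.cos(2 * t), np.sin(2 * t)], axis=1)
    r = points[None, :, :] - pos[:, None, :]          # dipole -> test point
    dist = np.linalg.norm(r, axis=2, keepdims=True)
    r_hat = r / dist
    m_dot_r = np.sum(m[:, None, :] * r_hat, axis=2, keepdims=True)
    # Point-dipole field: (3 (m . r_hat) r_hat - m) / |r|^3
    return (3 * m_dot_r * r_hat - m[:, None, :]) / dist**3


def permutation_costs(strengths, G, b0):
    """Cost of every assignment of magnets to slots (brute force)."""
    perms = np.array(list(itertools.permutations(range(len(strengths)))))
    S = strengths[perms]                               # (n_perm, n_magnets)
    B = np.einsum("ki,ipc->kpc", S, G)                 # (n_perm, n_points, 2)
    return np.abs(np.linalg.norm(B, axis=2) - b0).sum(axis=1)


def best_and_worst(n_magnets, mean, std, rng, ring_radius=1.0):
    """Return (min cost, max cost) over all permutations of one random set."""
    a = np.linspace(0, 2 * np.pi, 8, endpoint=False)
    circle = 0.25 * ring_radius * np.stack([np.cos(a), np.sin(a)], axis=1)
    points = np.vstack([[0.0, 0.0], circle])           # center + small circle
    G = unit_fields(n_magnets, ring_radius, points)
    # Ideal center field if every magnet had exactly the mean strength.
    b0 = np.linalg.norm(mean * G[:, 0, :].sum(axis=0))
    strengths = rng.normal(mean, std, n_magnets)
    costs = permutation_costs(strengths, G, b0)
    return costs.min(), costs.max()


rng = np.random.default_rng(0)
for std in (0.0, 0.01, 0.02, 0.05):
    lo, hi = best_and_worst(6, 1.0, std, rng)
    print(f"std={std:.2f}  min={lo:.4f}  max={hi:.4f}")
```

Brute force over permutations grows factorially with the number of magnets, which is why the sketch uses only six. At zero standard deviation all permutations are identical, so ‘min’ and ‘max’ coincide.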

An example of the optimization results you get running the program for a single randomly generated set of magnets at each standard deviation is shown below.

As you would expect, as the standard deviation in the magnetic field strength of the magnets gets larger, the effective field inhomogeneity of the NMR MANDHALA gets larger. However, the optimized permutation’s effective field inhomogeneity rises more slowly than that of the non-optimized permutation of magnets. This demonstrates that our optimization algorithm is indeed helping counteract the effects of magnetic field variances in our magnets. Any magnetic field inhomogeneity that remains when there is zero variance across the magnet sets must result from the fact that we are using discrete magnets to mimic an ideal dipole field, and we cannot correct for that without changing the geometry of the NMR MANDHALA itself.

For a nice annotated Jupyter notebook providing the code Aaron used for his simulations to test our optimization algorithm, see AggregatedMagnetVariance.ipynb in the ‘SLC Research’ GitHub repository.

Possible future steps:

Currently, our optimization parameter looks at the difference between the magnitude of the magnetic field at different test points inside the MANDHALA and the theoretical estimate of the magnetic field strength at the center of the MANDHALA if all magnets had exactly the same mean magnetic field strength. This takes all components of the magnetic field into account. In MRI, we typically only care about the homogeneity of the Z component of the magnetic field (since, presumably, the field strengths in the x and y directions are pretty small, so any Larmor precession would be at a frequency well outside of our bandwidth). Optimizing on only the Z component of the magnetic field might yield even more of the desired Bz homogeneity.
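To make the distinction concrete, here is a small Python sketch comparing a magnitude-based cost with a Bz-only cost. The function names, the array layout (one row per test point, columns Bx, By, Bz), and the sample field values are made up for illustration and do not come from our code or measurements.

```python
import numpy as np


def cost_magnitude(B, b0):
    """Summed |‖B‖ - b0| over test points: uses all three field components."""
    return np.abs(np.linalg.norm(B, axis=1) - b0).sum()


def cost_bz(B, b0):
    """Summed |Bz - b0| over test points: ignores the x and y components."""
    return np.abs(B[:, 2] - b0).sum()


# Hypothetical field samples (arbitrary units), columns are Bx, By, Bz.
B = np.array([
    [0.00, 0.00, 1.00],
    [0.05, 0.00, 1.00],
    [0.00, 0.05, 0.99],
])
print(cost_magnitude(B, 1.0), cost_bz(B, 1.0))
```

A point with a perfect Bz but a small transverse component is penalized by the magnitude-based cost and not by the Bz-only cost, so the two can rank permutations differently.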

We can also expand our optimization algorithm to optimize BOTH MANDHALAs at the same time, to find potentially even better configurations where optimized magnet placements in the center ring help correct for magnetic field inhomogeneities caused by the outer ring, and vice versa.